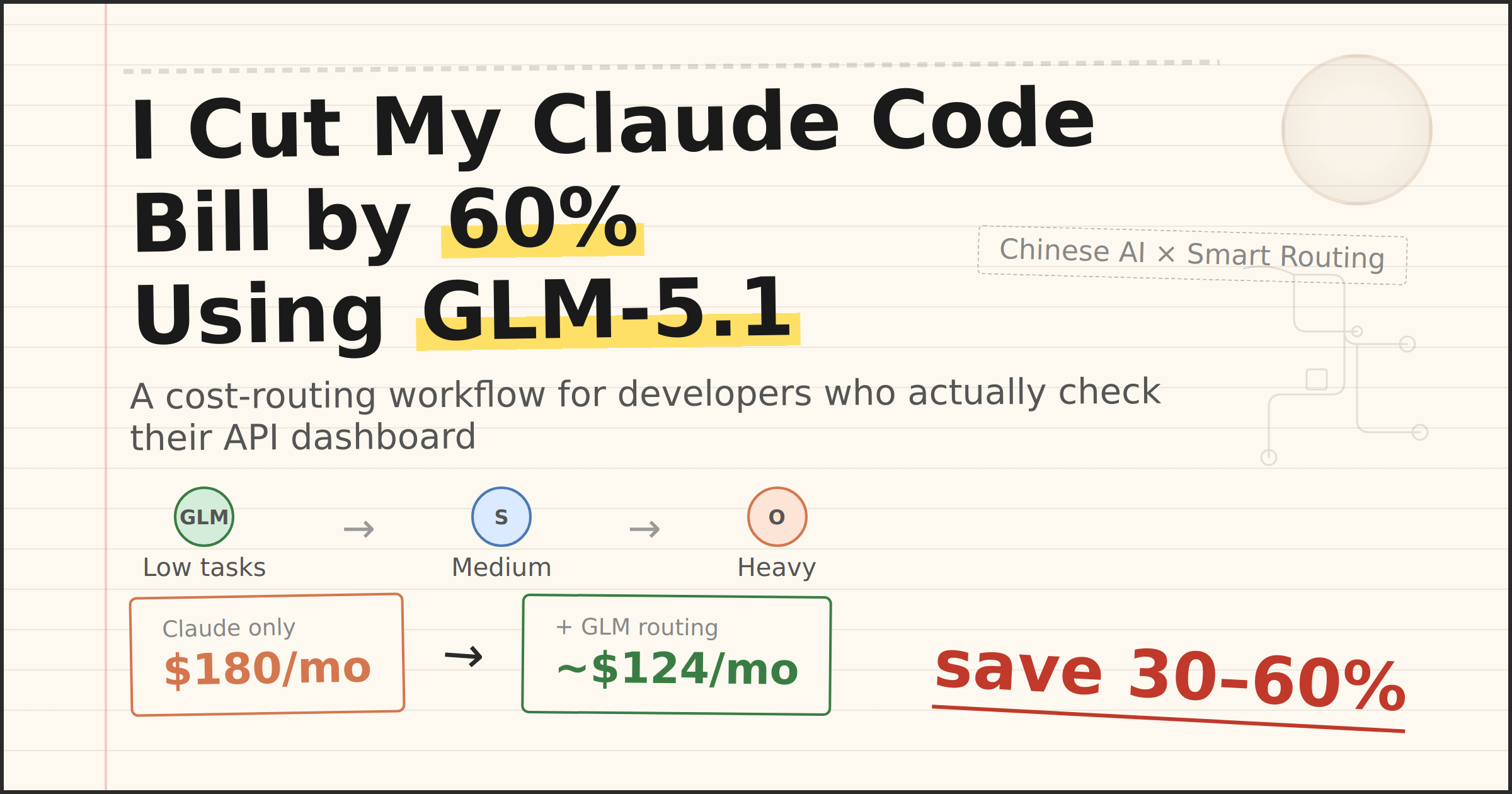

I Cut My Claude Code API Bill by 60% Using a Chinese AI Model You've Never Heard Of

If you use Claude Code seriously — not the occasional "fix this function" but hours-long agentic sessions across multi-file projects — you already know the feeling. You check your Anthropic dashboard, see three digits you weren't expecting, and wonder whether you're building software or funding a small research lab.

Claude Opus 4.6 runs $5 per million input tokens and $25 per million output tokens. Sonnet 4.6 is cheaper at $3/$15, but extended thinking and tool use still generate substantial token volumes during real coding sessions. One developer publicly tracked 10 billion tokens over eight months of heavy Claude Code usage — the API equivalent would have exceeded $15,000.

There has to be a middle layer. And I found mine in an unexpected place: Zhipu AI's GLM-5.1, released on March 27, 2026.

What GLM-5.1 Actually Is

GLM-5.1 is the post-trained iteration of GLM-5, Zhipu AI's (now branded Z.ai) 744-billion-parameter mixture-of-experts model. Only 40 billion parameters activate per inference call, which keeps latency and cost manageable despite the massive total parameter count. The model supports a 200K context window and up to 131,072 output tokens per response.

What makes GLM-5.1 notable is its coding score. In an evaluation conducted using Claude Code as the testing harness, GLM-5.1 scored 45.3 — representing 94.6% of Claude Opus 4.6's score of 47.9. That is a 28% improvement over the base GLM-5, which scored 35.4 on the same benchmark. The benchmarks are self-reported by Z.ai and have not been independently verified, so treat the exact numbers with appropriate caution. But in my own testing, the model handles straightforward coding tasks competently.

The pricing tells the real story. The standalone GLM-5 API is priced at $1.00 per million input tokens and $3.20 per million output tokens — roughly 5x cheaper on input and nearly 8x cheaper on output compared to Claude Opus 4.6. Z.ai also offers a subscription-based GLM Coding Plan starting at $3/month for 120 prompts, with a Pro tier at $15/month for 600 prompts. The Coding Plan is directly compatible with Claude Code — you change the API endpoint, not the tool.

Two additional details matter. GLM-5.1 was trained entirely on Huawei Ascend 910B chips with zero NVIDIA hardware, a fact that carries geopolitical significance given Zhipu's placement on the US Entity List since January 2025. And the GLM-5 weights are already open-source under MIT license, with Z.ai confirming GLM-5.1 will follow suit.

The Workflow: Route by Complexity, Not by Habit

The idea is simple and not original — Anthropic's own documentation recommends tiered model routing. But most developers think about routing within the Anthropic ecosystem: Haiku for classification, Sonnet for standard work, Opus for hard reasoning. Adding GLM-5.1 as an external tier below Sonnet creates a genuinely different cost curve.

My routing logic divides tasks into three bins. Low-complexity tasks — boilerplate generation, file scaffolding, docstring writing, simple refactors, test stub creation — go to GLM-5.1 via the Z.ai API. Medium-complexity tasks — debugging with context, multi-file edits requiring coherent reasoning, code review — stay on Claude Sonnet 4.6. High-complexity tasks — architecture decisions, subtle bug hunts across large codebases, anything requiring deep chain-of-thought reasoning — go to Claude Opus 4.6 with extended thinking enabled.

In practice, 50–60% of my Claude Code interactions fall into the low-complexity category. These are the tasks where you don't need frontier-level reasoning; you need a model that follows instructions reliably and writes syntactically correct code.

A Minimal Task Router

Here is a simplified version of the routing logic I use. In production, you would wrap this around your actual API calls and add error handling, but the core decision tree is this straightforward:

from enum import Enum

class Complexity(Enum):

LOW = "low"

MEDIUM = "medium"

HIGH = "high"

LOW_KEYWORDS = {"scaffold", "boilerplate", "stub", "docstring",

"rename", "format", "simple test", "template"}

def classify_task(prompt: str) -> Complexity:

prompt_lower = prompt.lower()

if any(kw in prompt_lower for kw in LOW_KEYWORDS):

return Complexity.LOW

if len(prompt) > 2000 or "debug" in prompt_lower or "refactor" in prompt_lower:

return Complexity.MEDIUM

return Complexity.LOW # default cheap

def get_endpoint(complexity: Complexity) -> dict:

routes = {

Complexity.LOW: {

"base_url": "https://open.bigmodel.cn/api/paas/v4",

"model": "glm-5",

"cost_per_mtok_in": 1.00,

"cost_per_mtok_out": 3.20,

},

Complexity.MEDIUM: {

"base_url": "https://api.anthropic.com/v1",

"model": "claude-sonnet-4-6-20260220",

"cost_per_mtok_in": 3.00,

"cost_per_mtok_out": 15.00,

},

Complexity.HIGH: {

"base_url": "https://api.anthropic.com/v1",

"model": "claude-opus-4-6-20260205",

"cost_per_mtok_in": 5.00,

"cost_per_mtok_out": 25.00,

},

}

return routes[complexity]

The Z.ai API supports OpenAI-compatible request formats, so switching between endpoints requires minimal code changes. If you are using the GLM Coding Plan subscription instead of the raw API, you can point Claude Code directly at the Z.ai endpoint.

The Cost Math

Assume a monthly workload of 20 million input tokens and 8 million output tokens — a realistic figure for someone using Claude Code daily for professional development work.

Running everything through Sonnet 4.6 would cost (20 × $3) + (8 × $15) = $180/month.

With a 55/30/15 split routing low tasks to GLM-5.1, medium to Sonnet, and high to Opus, the breakdown looks like this: GLM-5.1 handles 11M input / 4.4M output at $11 + $14.08 = $25.08. Sonnet handles 6M input / 2.4M output at $18 + $36 = $54. Opus handles 3M input / 1.2M output at $15 + $30 = $45. The total is roughly $124/month — a 31% reduction. If your low-complexity share is higher (which mine is), savings approach 40–60%.

Factor in Anthropic's prompt caching on the Sonnet and Opus portions, and the effective cost drops further.

Where GLM-5.1 Falls Short

This is not a drop-in replacement for Claude. Several limitations are worth flagging.

Context window is 200K versus Claude's 1M tokens. For large codebase analysis, GLM-5.1 simply cannot hold enough context. Reasoning depth on ambiguous, multi-step problems remains measurably below Claude Opus. The self-reported benchmarks reflect coding performance specifically; general reasoning and instruction following have not been independently evaluated at the same level. Latency can be inconsistent — Z.ai experienced capacity issues in late February 2026, and while the subscription model is more predictable, peak-hour throttling has been reported. Finally, the model's strongest performance is in English and Chinese; if your codebase involves extensive natural-language processing in other languages, test carefully.

The benchmarks being self-reported is a recurring caveat. Until independent evaluations confirm GLM-5.1's numbers, treat the 94.6% figure as a marketing claim. My own experience suggests it is directionally correct for routine coding tasks, but the gap widens on anything requiring nuanced reasoning.

Who This Is For

This workflow makes sense for independent developers and small teams who pay for Claude Code via API and feel the cost pressure month-to-month. It is particularly well-suited to anyone already comfortable with Chinese AI tools or willing to create an account on Z.ai's platform.

It does not make sense if you are on a Claude Max plan ($100–200/month) that already bundles unlimited usage, or if your work is almost entirely high-complexity reasoning where Claude Opus is genuinely the right tool for every task.

The broader point is strategic. The Chinese AI ecosystem is producing models that are cost-competitive and increasingly capable. Ignoring that ecosystem because the tools are unfamiliar or the documentation is partially in Mandarin is leaving money on the table. GLM-5.1 is not the last model that will make this kind of routing worthwhile — it is one of the first.

Oliver Wood writes about AI tools, behavioral economics, and the Chinese AI ecosystem. Follow on Medium @oliver_wood or on X.

コメント